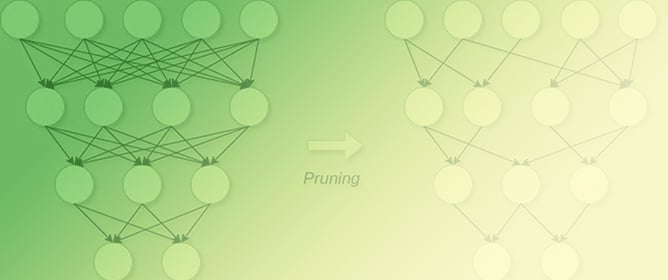

Activation-Based Pruning of Neural Networks

A Biased-Randomized Discrete Event Algorithm to Improve the Productivity of Automated Storage and Retrieval Systems in the Steel Industry

Enhancing Cryptocurrency Price Forecasting by Integrating Machine Learning with Social Media and Market Data

Deep Learning-Based Visual Complexity Analysis of Electroencephalography Time-Frequency Images: Can It Localize the Epileptogenic Zone in the Brain?

Journal Description

Algorithms

Algorithms is a peer-reviewed, open access journal that provides an advanced forum for studies related to algorithms and their applications. Algorithms is published monthly online by MDPI. The European Society for Fuzzy Logic and Technology (EUSFLAT) is affiliated with Algorithms, and its members receive discounts on the article processing charges.

- Open Access: free for readers, with article processing charges (APC) paid by authors or their institutions.

- High Visibility: indexed within Scopus, ESCI (Web of Science), Ei Compendex, and other databases.

- Journal Rank: CiteScore - Q2 (Numerical Analysis)

- Rapid Publication: manuscripts are peer-reviewed and a first decision is provided to authors approximately 15 days after submission; acceptance to publication is undertaken in 2.9 days (median values for papers published in this journal in the second half of 2023).

- Testimonials: See what our editors and authors say about Algorithms.

- Recognition of Reviewers: reviewers who provide timely, thorough peer-review reports receive vouchers entitling them to a discount on the APC of their next publication in any MDPI journal, in appreciation of the work done.

Impact Factor: 2.3 (2022); 5-Year Impact Factor: 2.2 (2022)

Latest Articles

The Weighted Least-Squares Approach to State Estimation in Linear State Space Models: The Case of Correlated Noise Terms

Algorithms 2024, 17(5), 194; https://doi.org/10.3390/a17050194 (registering DOI) - 04 May 2024

Abstract

In this article, a particular approach to deriving recursive state estimators for linear state space models is generalised, namely the weighted least-squares approach introduced by Duncan and Horn in 1972, for the case of the two noise processes arising in such models being cross-correlated; in this context, the fact that in the available literature two different non-equivalent recursive algorithms are presented for the task of state estimation in the aforementioned case is discussed. Although the origin of the difference between these two algorithms can easily be identified, the issue has only rarely been discussed so far. Then the situations in which each of the two algorithms apply are explored, and a generalised Kalman filter which represents a merger of the two original algorithms is proposed. While, strictly speaking, optimal state estimates can be obtained only through the non-recursive weighted least-squares approach, in examples of modelling simulated and real-world data, the recursive generalised Kalman filter shows almost as good performance as the optimal non-recursive filter.

(This article belongs to the Collection Feature Papers in Algorithms for Multidisciplinary Applications)

Open Access Article

The Algorithm of Gu and Eisenstat and D-Optimal Design of Experiments

by

Alistair Forbes

Algorithms 2024, 17(5), 193; https://doi.org/10.3390/a17050193 - 02 May 2024

Abstract

This paper addresses the following problem: given m potential observations to determine n parameters,

(This article belongs to the Special Issue Numerical Optimization and Algorithms: 2nd Edition)

Open Access Article

Encouraging Eco-Innovative Urban Development

by

Victor Alves, Florentino Fdez-Riverola, Jorge Ribeiro, José Neves and Henrique Vicente

Algorithms 2024, 17(5), 192; https://doi.org/10.3390/a17050192 - 01 May 2024

Abstract

This article explores the intertwining connections among artificial intelligence, machine learning, digital transformation, and computational sustainability, detailing how these elements jointly empower citizens within a smart city framework. As technological advancement accelerates, smart cities harness these innovations to improve residents’ quality of life. Artificial intelligence and machine learning act as data analysis powerhouses, making urban living more personalized, efficient, and automated, and are pivotal in managing complex urban infrastructures, anticipating societal requirements, and averting potential crises. Digital transformation transforms city operations by weaving digital technology into every facet of urban life, enhancing value delivery to citizens. Computational sustainability, a fundamental goal for smart cities, harnesses artificial intelligence, machine learning, and digital resources to forge more environmentally responsible cities, minimize ecological impact, and nurture sustainable development. The synergy of these technologies empowers residents to make well-informed choices, actively engage in their communities, and adopt sustainable lifestyles. This discussion illuminates the mechanisms and implications of these interconnections for future urban existence, ultimately focusing on empowering citizens in smart cities.

(This article belongs to the Special Issue Algorithms for Smart Cities)

Open Access Article

Mathematical Models for the Single-Channel and Multi-Channel PMU Allocation Problem and Their Solution Algorithms

by

Nikolaos P. Theodorakatos, Rohit Babu, Christos A. Theodoridis and Angelos P. Moschoudis

Algorithms 2024, 17(5), 191; https://doi.org/10.3390/a17050191 - 30 Apr 2024

Abstract

Phasor measurement units (PMUs) are deployed at power grid nodes around the transmission grid, determining precise power system monitoring conditions. In real life, it is not realistic to place a PMU at every power grid node; thus, the lowest PMU number is optimally selected for the full observation of the entire network. In this study, the PMU placement model is reconsidered, taking into account single- and multi-capacity placement models rather than the well-studied PMU placement model with an unrestricted number of channels. A restricted number of channels per monitoring device is used, instead of supposing that a PMU is able to observe all incident buses through the transmission connectivity lines. The optimization models are formulated in close relation to the power dominating set and minimum edge cover problems in graph theory. These discrete optimization problems are directly related to the minimum set covering problem. Initially, the allocation model is declared as a constrained mixed-integer linear program implemented by mathematical and stochastic algorithms. Then, the integer linear problem is reformulated into a non-convex constraint program to find optimality. The mathematical models are solved either in binary form or in the continuous domain using specialized optimization libraries, and are all implemented in YALMIP software in conjunction with MATLAB. Mixed-integer linear solvers, nonlinear programming solvers, and heuristic algorithms are utilized in the aforementioned software packages to locate the global solution for each instance solved in this application, which considers the transformation of the existing power grids to smart grids.

(This article belongs to the Special Issue Convex Optimization Methods and Metaheuristic Algorithms for Power Systems Planning, Operation and Control)

Open Access Article

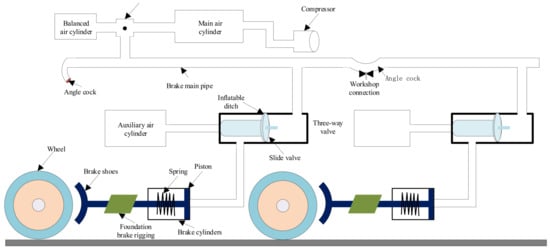

Deep Q-Network Algorithm-Based Cyclic Air Braking Strategy for Heavy-Haul Trains

by

Changfan Zhang, Shuo Zhou, Jing He and Lin Jia

Algorithms 2024, 17(5), 190; https://doi.org/10.3390/a17050190 - 30 Apr 2024

Abstract

Cyclic air braking is a key element for ensuring safe train operation when running on a long and steep downhill railway section. In reality, the cyclic braking performance of a train is affected by its operating environment, speed and air-refilling time. Existing optimization algorithms have the problem of low learning efficiency. To solve this problem, an intelligent control method based on the deep Q-network (DQN) algorithm for heavy-haul trains running on long and steep downhill railway sections is proposed. Firstly, the environment of heavy-haul train operation is designed by considering the line characteristics, speed limits and constraints of the train pipe’s air-refilling time. Secondly, the control process of heavy-haul trains running on long and steep downhill sections is described as the reinforcement learning (RL) of a Markov decision process. By designing the critical elements of RL, a cyclic braking strategy for heavy-haul trains is established based on the reinforcement learning algorithm. Thirdly, the deep neural network and Q-learning are combined to design a neural network for approximating the action value function so that the algorithm can achieve the optimal action value function faster. Finally, simulation experiments are conducted on the actual track data pertaining to the Shuozhou–Huanghua line in China to compare the performance of the Q-learning algorithm against the DQN algorithm. Our findings revealed that the DQN-based intelligent control strategy decreased the air braking distance by 2.1% and enhanced the overall average speed by more than 7%. These experiments unequivocally demonstrate the efficacy and superiority of the DQN-based intelligent control strategy.
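
For readers unfamiliar with the Q-learning baseline mentioned in this abstract, the following is a minimal sketch of the standard tabular Q-learning update, which a DQN replaces with a neural-network approximation of the action value function. It is not the authors' implementation: the state names, action names, reward, and hyperparameters are hypothetical and chosen only for illustration.

```python
def q_update(q, state, action, reward, next_state, alpha=0.5, gamma=0.9):
    """Apply one tabular Q-learning update and return the new Q(state, action).

    q is a dict of dicts: q[state][action] -> estimated action value.
    alpha (learning rate) and gamma (discount factor) are illustrative defaults.
    """
    # Greedy estimate of the value of the next state.
    best_next = max(q[next_state].values())
    # Temporal-difference target: immediate reward plus discounted future value.
    td_target = reward + gamma * best_next
    # Move the current estimate a fraction alpha toward the target.
    q[state][action] += alpha * (td_target - q[state][action])
    return q[state][action]


# Hypothetical two-state example with "brake" and "release" actions.
q = {
    "s0": {"brake": 0.0, "release": 0.0},
    "s1": {"brake": 1.0, "release": 0.0},
}
new_value = q_update(q, "s0", "brake", reward=1.0, next_state="s1")
```

Here the target is 1.0 + 0.9 × 1.0 = 1.9, so the updated estimate moves halfway from 0.0 toward 1.9.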

(This article belongs to the Special Issue Algorithms in Evolutionary Reinforcement Learning)

Open Access Review

Insights into Image Understanding: Segmentation Methods for Object Recognition and Scene Classification

by

Sarfaraz Ahmed Mohammed and Anca L. Ralescu

Algorithms 2024, 17(5), 189; https://doi.org/10.3390/a17050189 - 30 Apr 2024

Abstract

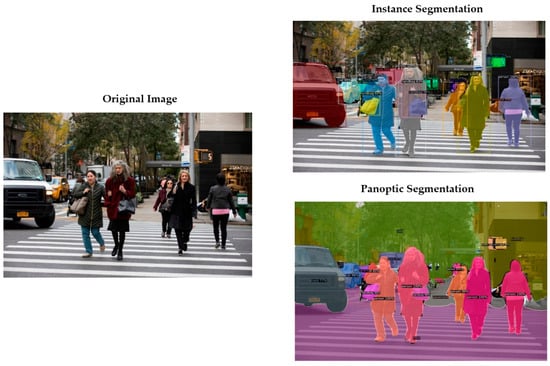

Image understanding plays a pivotal role in various computer vision tasks, such as extraction of essential features from images, object detection, and segmentation. At a higher level of granularity, both semantic and instance segmentation are necessary for fully grasping a scene. In recent times, the concept of panoptic segmentation has emerged as a field of study that unifies semantic and instance segmentation. This article sheds light on the pivotal role of panoptic segmentation as a visualization tool for understanding scene components, including object detection, categorization, and precise localization of scene elements. Advancements in achieving panoptic segmentation and suggested improvements to the predicted outputs through a top-down approach are discussed. Furthermore, datasets relevant to both scene recognition and panoptic segmentation are explored to facilitate a comparative analysis. Finally, the article outlines certain promising directions in image recognition and analysis by underlining the ongoing evolution in image understanding methodologies.

(This article belongs to the Collection Traditional and Machine Learning Methods to Solve Imaging Problems)

Open Access Article

A Data-Driven Approach to Discovering Process Choreography

by

Jaciel David Hernandez-Resendiz, Edgar Tello-Leal and Marcos Sepúlveda

Algorithms 2024, 17(5), 188; https://doi.org/10.3390/a17050188 - 29 Apr 2024

Abstract

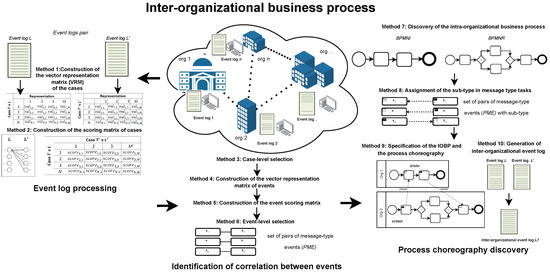

Implementing approaches based on process mining in inter-organizational collaboration environments presents challenges related to the granularity of event logs, the privacy and autonomy of business processes, and the alignment of event data generated in inter-organizational business process (IOBP) execution. Therefore, this paper proposes a complete and modular data-driven approach that implements natural language processing techniques, text similarity, and process mining techniques (discovery and conformance checking) through a set of methods and formal rules that enable analysis of the data contained in the event logs and the intra-organizational process models of the participants in the collaboration, to identify patterns that allow the discovery of the process choreography. The approach enables merging the event logs of the inter-organizational collaboration participants from the identified message interactions, enabling the automatic construction of an IOBP model. The proposed approach was evaluated using four real-life and two artificial event logs. In discovering the choreography process, average values of 0.86, 0.89, and 0.86 were obtained for relationship precision, relation recall, and relationship F-score metrics. In evaluating the quality of the built IOBP models, values of 0.95 and 1.00 were achieved for the precision and recall metrics, respectively. The performance obtained in the different scenarios is encouraging, demonstrating the ability of the approach to discover the process choreography and the construction of business process models in inter-organizational environments.

(This article belongs to the Special Issue Data-Driven Intelligent Modeling and Optimization Algorithms for Industrial Processes)

Open Access Article

MMD-MSD: A Multimodal Multisensory Dataset in Support of Research and Technology Development for Musculoskeletal Disorders

by

Valentina Markova, Todor Ganchev, Silvia Filkova and Miroslav Markov

Algorithms 2024, 17(5), 187; https://doi.org/10.3390/a17050187 - 29 Apr 2024

Abstract

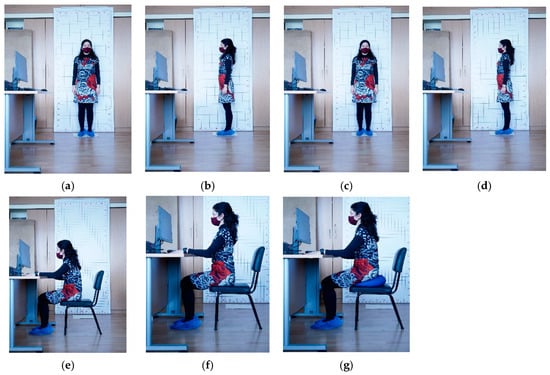

Improper sitting positions are known as the primary reason for back pain and the emergence of musculoskeletal disorders (MSDs) among individuals who spend prolonged time working with computer screens, keyboards, and mice. At the same time, it is well understood that automated technological tools can play an important role in the process of unhealthy habit alteration, so plenty of research efforts are focused on research and technology development (RTD) activities that aim to provide support for the prevention of back pain or the development of MSDs. Here, we report on creating a new resource in support of RTD activities aiming at the automated detection of improper sitting positions. It consists of multimodal multisensory recordings of 100 persons, made with a video recorder, camera, and wrist-attached sensors that capture physiological signals (PPG, EDA, skin temperature), as well as motion sensors (three-axis accelerometer). Our multimodal multisensory dataset (MMD-MSD) opens new opportunities for modeling the body stance (sitting posture and movements), physiological state (stress level, attention, emotional arousal and valence), and performance (success rate on the Stroop test) of people working with a computer. Finally, we demonstrate two use cases: improper neck posture detection from pictures, and task-specific cognitive load detection from physiological signals.

(This article belongs to the Section Databases and Data Structures)

Open Access Article

Synesth: Comprehensive Syntenic Reconciliation with Unsampled Lineages

by

Mattéo Delabre and Nadia El-Mabrouk

Algorithms 2024, 17(5), 186; https://doi.org/10.3390/a17050186 - 29 Apr 2024

Abstract

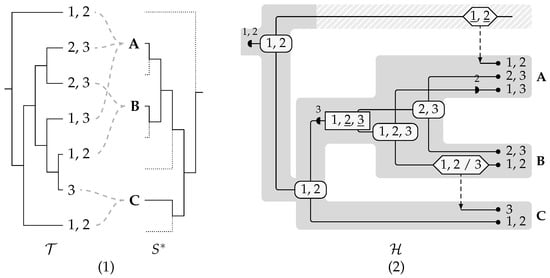

We present Synesth, the most comprehensive and flexible tool for tree reconciliation that allows for events on syntenies (i.e., on sets of multiple genes), including duplications, transfers, fissions, and transient events going through unsampled species. This model allows for building histories that explicate the inconsistencies between a synteny tree and its associated species tree. We examine the combinatorial properties of this extended reconciliation model and study various associated parsimony problems. First, the infinite set of explicatory histories is reduced to a finite but exponential set of Pareto-optimal histories (in terms of counts of each event type), then to a polynomial set of Pareto-optimal event count vectors, and this eventually ends with minimum event cost histories given an event cost function. An inductive characterization of the solution space using different algebras for each granularity leads to efficient dynamic programming algorithms, ultimately ending with an

(This article belongs to the Section Combinatorial Optimization, Graph, and Network Algorithms)

Open Access Article

A Linear Interpolation and Curvature-Controlled Gradient Optimization Strategy Based on Adam

by

Haijing Sun, Wen Zhou, Yichuan Shao, Jiaqi Cui, Lei Xing, Qian Zhao and Le Zhang

Algorithms 2024, 17(5), 185; https://doi.org/10.3390/a17050185 - 29 Apr 2024

Abstract

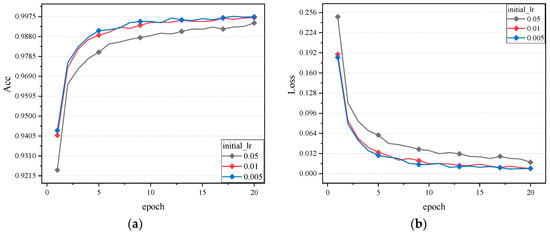

The Adam algorithm is a widely used optimizer for neural network training due to its efficient convergence speed. However, the algorithm is prone to an unstable learning rate and performance degradation on some models. To solve these problems, in this paper, an improved algorithm named Linear Curvature Momentum Adam (LCMAdam) is proposed, which introduces curvature-controlled gradient and linear interpolation strategies. The curvature-controlled gradient can make the gradient update smoother, and the linear interpolation technique can adaptively adjust the size of the learning rate according to the characteristics of the curve during the training process so that it can find the exact value faster, which improves the efficiency and robustness of training. The experimental results show that the LCMAdam algorithm achieves 98.49% accuracy on the MNIST dataset, 75.20% on the CIFAR10 dataset, and 76.80% on the Stomach dataset, whose medical images are more difficult to recognize. The LCMAdam optimizer achieves significant performance gains on a variety of neural network structures and tasks, proving its effectiveness and utility in the field of deep learning.
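
As background for this abstract, the sketch below shows the standard Adam update on which such variants build, applied to a one-dimensional quadratic. It is the baseline optimizer only, not the LCMAdam method described in the paper; the function name `adam_minimize`, the test objective, and the hyperparameter values are assumptions made for illustration.

```python
from math import sqrt


def adam_minimize(grad, x0, lr=0.1, beta1=0.9, beta2=0.999, eps=1e-8, steps=500):
    """Minimize a scalar function with the standard Adam update rule.

    grad is a callable returning the gradient at x. All hyperparameters
    are illustrative defaults, not values taken from the paper.
    """
    x, m, v = x0, 0.0, 0.0
    for t in range(1, steps + 1):
        g = grad(x)
        # Exponential moving averages of the gradient and its square.
        m = beta1 * m + (1 - beta1) * g
        v = beta2 * v + (1 - beta2) * g * g
        # Bias correction compensates for the zero initialization of m and v.
        m_hat = m / (1 - beta1 ** t)
        v_hat = v / (1 - beta2 ** t)
        # Parameter step scaled by the running gradient magnitude.
        x -= lr * m_hat / (sqrt(v_hat) + eps)
    return x


# Hypothetical objective f(x) = (x - 3)^2, whose gradient is 2 * (x - 3).
x_min = adam_minimize(lambda x: 2 * (x - 3), x0=0.0)
```

The per-parameter scaling by the square root of `v_hat` is the adaptive learning rate whose instability the abstract refers to.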

Open Access Article

Algorithm Based on Morphological Operators for Shortness Path Planning

by

Jorge L. Perez-Ramos, Selene Ramirez-Rosales, Daniel Canton-Enriquez, Luis A. Diaz-Jimenez, Gabriela Xicotencatl-Ramirez, Ana M. Herrera-Navarro and Hugo Jimenez-Hernandez

Algorithms 2024, 17(5), 184; https://doi.org/10.3390/a17050184 - 29 Apr 2024

Abstract

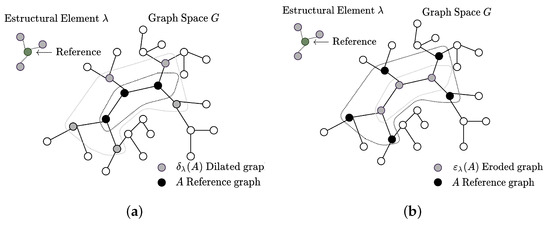

The problem of finding the best path trajectory in a graph is highly complex due to its combinatorial nature, making it difficult to solve. Standard search algorithms focus on selecting the best path trajectory by introducing constraints to estimate a suitable solution, but this approach may overlook potentially better alternatives. Despite the number of restrictions and variables in path planning, no solution minimizes the computational resources used to reach the goal. To address this issue, a framework is proposed to compute the best trajectory in a graph by introducing the mathematical morphology concept. The framework builds a lattice over the graph space using mathematical morphology operators. The searching algorithm creates a metric space by applying the morphological covering operator to the graph and weighing the cost of traveling across the lattice. Ultimately, the cumulative traveling criterion creates the optimal path trajectory by selecting the minima/maxima cost. A test is introduced to validate the framework’s functionality, and a sample application is presented to validate its usefulness. The application uses the structure of the avenues as a graph. It proposes a computable approach to find the most suitable paths from a given start and destination reference. The results confirm that this is a generalized graph search framework based on morphological operators that can be compared to the Dijkstra approach.

(This article belongs to the Section Algorithms for Multidisciplinary Applications)

Open Access Article

Advancements in Data Analysis for the Work-Sampling Method

by

Borut Buchmeister and Natasa Vujica Herzog

Algorithms 2024, 17(5), 183; https://doi.org/10.3390/a17050183 - 29 Apr 2024

Abstract

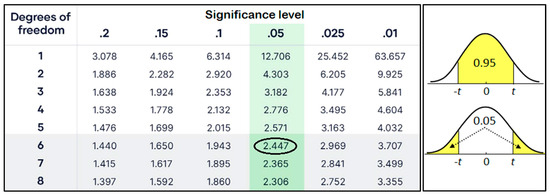

The work-sampling method makes it possible to gain valuable insights into what is happening in production systems. Work sampling is a process used to estimate the proportion of shift time that workers (or machines) spend on different activities (within productive work or losses). It is estimated based on enough random observations of activities over a selected period. When workplace operations do not have short cycle times or high repetition rates, the use of such a statistical technique is necessary because the labor sampling data can provide information that can be used to set standards. The work-sampling procedure is well standardized, but additional contributions are possible when evaluating the observations. In this paper, we present our contribution to improving the decision-making process based on work-sampling data. We introduce a correlation comparison of the measured hourly shares of all activities in pairs to check whether there are mutual connections or to uncover hidden connections between activities. The results allow for easier decision-making (conclusions) regarding the influence of the selected activities on the triggering of the others. With the additional calculation method, we can uncover behavioral patterns that would have been overlooked with the basic method. This leads to improved efficiency and productivity of the production system.
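
The pairwise correlation comparison of hourly activity shares described in this abstract can be illustrated with a minimal sketch. It assumes the Pearson coefficient as the correlation measure (the abstract does not name one), and the activity names and hourly shares are made-up example data, not results from the paper.

```python
from itertools import combinations
from math import sqrt


def pearson(x, y):
    """Pearson correlation of two equal-length sequences; NaN if either is constant."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        return float("nan")
    return cov / sqrt(var_x * var_y)


def pairwise_correlations(hourly_shares):
    """Correlate every pair of activities over their hourly shares."""
    return {
        (a, b): pearson(hourly_shares[a], hourly_shares[b])
        for a, b in combinations(hourly_shares, 2)
    }


# Hypothetical hourly shares of shift time (one value per observed hour).
hourly_shares = {
    "productive_work": [0.70, 0.60, 0.65, 0.55],
    "waiting": [0.20, 0.30, 0.25, 0.35],
    "breaks": [0.10, 0.10, 0.10, 0.10],
}
correlations = pairwise_correlations(hourly_shares)
```

In this made-up data, waiting rises exactly as productive work falls, so that pair is strongly negatively correlated, while the constant break share yields an undefined (NaN) coefficient.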

(This article belongs to the Special Issue Data-Driven Intelligent Modeling and Optimization Algorithms for Industrial Processes)

Open Access Article

Sub-Band Backdoor Attack in Remote Sensing Imagery

by

Kazi Aminul Islam, Hongyi Wu, Chunsheng Xin, Rui Ning, Liuwan Zhu and Jiang Li

Algorithms 2024, 17(5), 182; https://doi.org/10.3390/a17050182 - 28 Apr 2024

Abstract

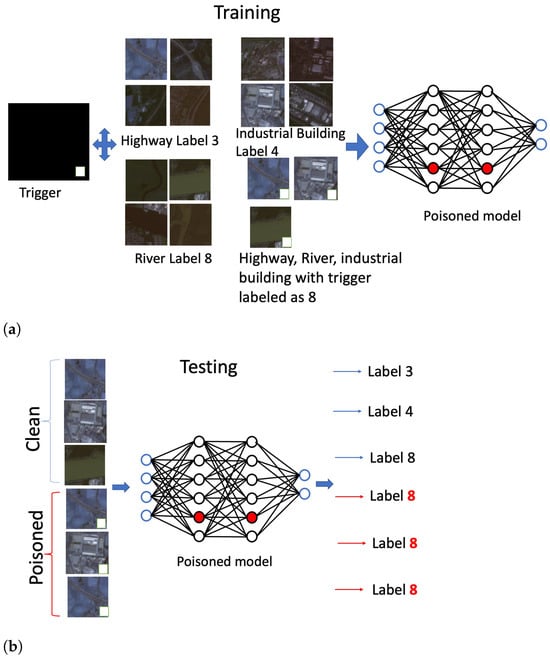

Remote sensing datasets usually have a wide range of spatial and spectral resolutions. They provide unique advantages in surveillance systems, and many government organizations use remote sensing multispectral imagery to monitor security-critical infrastructures or targets. Artificial Intelligence (AI) has advanced rapidly in recent years and has been widely applied to remote image analysis, achieving state-of-the-art (SOTA) performance. However, AI models are vulnerable and can be easily deceived or poisoned. A malicious user may poison an AI model by creating a stealthy backdoor. A backdoored AI model performs well on clean data but behaves abnormally when a planted trigger appears in the data. Backdoor attacks have been extensively studied in machine learning-based computer vision applications with natural images. However, much less research has been conducted on remote sensing imagery, which typically consists of many more bands in addition to the red, green, and blue bands found in natural images. In this paper, we first extensively studied a popular backdoor attack, BadNets, applied to a remote sensing dataset, where the trigger was planted in all of the bands in the data. Our results showed that SOTA defense mechanisms, including Neural Cleanse, TABOR, Activation Clustering, Fine-Pruning, GangSweep, Strip, DeepInspect, and Pixel Backdoor, had difficulties detecting and mitigating the backdoor attack. We then proposed an explainable AI-guided backdoor attack specifically for remote sensing imagery by placing triggers in the image sub-bands. Our proposed attack model poses even stronger challenges to these SOTA defense mechanisms, and no method was able to defend against it. These results send an alarming message about the catastrophic effects that backdoor attacks may have on satellite imagery.

(This article belongs to the Special Issue Machine Learning Models and Algorithms for Image Processing)

Open Access Article

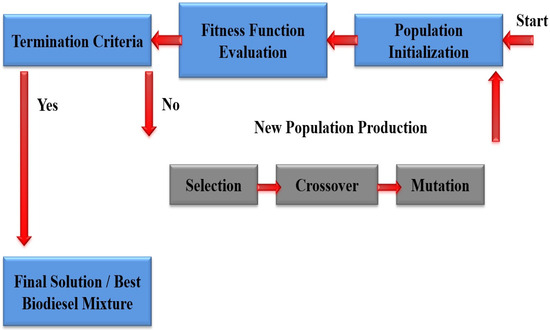

Application of Evolutionary Computation to the Optimization of Biodiesel Mixtures Using a Nature-Inspired Adaptive Genetic Algorithm

by

Vasileios Vasileiadis, Christos Kyriklidis, Vayos Karayannis and Constantinos Tsanaktsidis

Algorithms 2024, 17(5), 181; https://doi.org/10.3390/a17050181 - 28 Apr 2024

Abstract

The present research work introduces a novel mixture optimization methodology for biodiesel fuels using an Evolutionary Computation method inspired by biological evolution. Specifically, the optimal biodiesel composition is deduced from the application of a nature-inspired adaptive genetic algorithm that first examines percentages of the ingredients in the optimal mixtures. The innovative approach’s effectiveness lies in problem simulation with improvements in the evaluation of the specific function and the way to define and tune the genetic algorithm. Environmental imperatives in the era of climate change currently impose the optimized production of alternative environmentally friendly biofuels to replace fossil fuels. Biodiesel, in particular, appears to be more attractive in recent years, as it originates from renewable bio-derived resources. The main ingredients of the specific biofuel mixture investigated in this research are diesel and biodiesel (100% from bioresources). The assessment of the new biodiesel examined was performed using a fitness function that estimated both the density and cost of the fuel. Beyond the evaluation criterion of cost, density also influences the suitability of this biofuel for commercial use and market sale. The outcomes from the modeling process can be beneficial in saving cost and time for new biodiesel production by using this novel decision-making tool in comparison with randomized laboratory experimentations.

(This article belongs to the Special Issue Bio-Inspired Algorithms)

Open Access Article

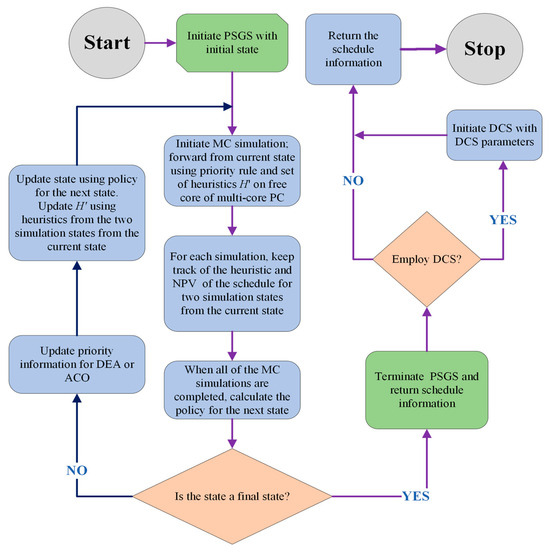

Maximizing Net Present Value for Resource Constraint Project Scheduling Problems with Payments at Event Occurrences Using Approximate Dynamic Programming

by

Tshewang Phuntsho and Tad Gonsalves

Algorithms 2024, 17(5), 180; https://doi.org/10.3390/a17050180 - 28 Apr 2024

Abstract

Resource Constraint Project Scheduling Problems with Discounted Cash Flows (RCPSPDC) focuses on maximizing the net present value by summing the discounted cash flows of project activities. An extension of this problem is the Payment at Event Occurrences (PEO) scheme, where the client makes multiple payments to the contractor upon completion of predefined activities, with additional final settlement at project completion. Numerous approximation methods such as metaheuristics have been proposed to solve this NP-hard problem. However, these methods suffer from parameter control and/or the computational cost of correcting infeasible solutions. Alternatively, approximate dynamic programming (ADP) sequentially generates a schedule based on strategies computed via Monte Carlo (MC) simulations. This saves the computations required for solution corrections, but its performance is highly dependent on its strategy. In this study, we propose the hybridization of ADP with three different metaheuristics to take advantage of their combined strengths, resulting in six different models. The Estimation of Distribution Algorithm (EDA) and Ant Colony Optimization (ACO) were used to recommend policies for ADP. A Discrete Cuckoo Search (DCS) further improved the schedules generated by ADP. Our experimental analysis performed on the j30, j60, and j90 datasets of PSPLIB has shown that ADP–DCS is better than ADP alone. Implementing the EDA and ACO as prioritization strategies for Monte Carlo simulations greatly improved the solutions with high statistical significance. In addition, models with the EDA showed better performance than those with ACO and random priority, especially when the number of events increased.

Full article

(This article belongs to the Special Issue Algorithms and Optimization for Project Management and Supply Chain Management)

Open Access Article

CentralBark Image Dataset and Tree Species Classification Using Deep Learning

by

Charles Warner, Fanyou Wu, Rado Gazo, Bedrich Benes, Nicole Kong and Songlin Fei

Algorithms 2024, 17(5), 179; https://doi.org/10.3390/a17050179 - 27 Apr 2024

Abstract

The task of tree species classification through deep learning has been challenging for the forestry community, and the lack of standardized datasets has hindered further progress. Our work presents a solution in the form of a large bark image dataset called CentralBark, which enhances deep learning-based tree species classification. Additionally, we have laid out an efficient and repeatable data collection protocol to assist future work in an organized manner. The dataset contains images of 25 central hardwood and Appalachian region tree species, with over 19,000 images of varying diameters, light, and moisture conditions. We tested 25 species: elm, oak, American basswood, American beech, American elm, American sycamore, bitternut hickory, black cherry, black locust, black oak, black walnut, eastern cottonwood, hackberry, honey locust, northern red oak, Ohio buckeye, Osage-orange, pignut hickory, sassafras, shagbark hickory, silver maple, slippery elm, sugar maple, sweetgum, white ash, white oak, and yellow poplar. Our experiment involved testing three different models to assess the feasibility of species classification using unaltered and uncropped images during the species-classification training process. We achieved an overall accuracy of 83.21% using the EfficientNet-b3 model, which was the best of the three models (EfficientNet-b3, ResNet-50, and MobileNet-V3-small), and an average accuracy of 80.23%.

Full article

(This article belongs to the Special Issue Recent Advances in Algorithms for Computer Vision Applications)

Open Access Article

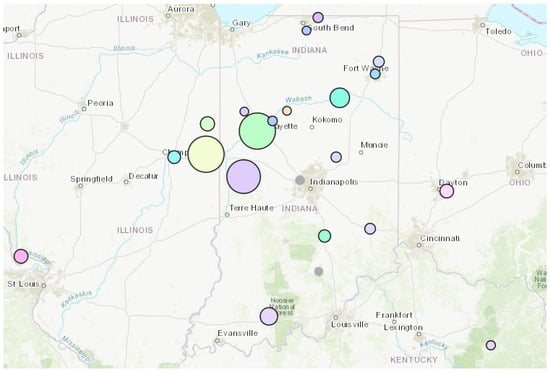

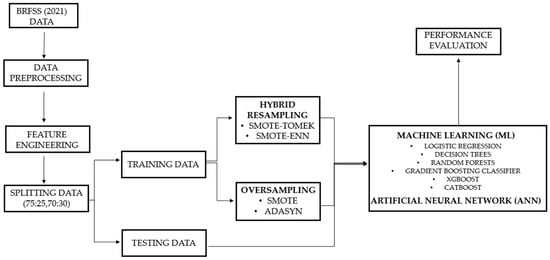

Strategic Machine Learning Optimization for Cardiovascular Disease Prediction and High-Risk Patient Identification

by

Konstantina-Vasiliki Tompra, George Papageorgiou and Christos Tjortjis

Algorithms 2024, 17(5), 178; https://doi.org/10.3390/a17050178 - 26 Apr 2024

Abstract

Despite medical advancements in recent years, cardiovascular diseases (CVDs) remain a major factor in rising mortality rates, challenging predictions despite extensive expertise. The healthcare sector is poised to benefit significantly from harnessing massive data and the insights we can derive from it, underscoring the importance of integrating machine learning (ML) to improve CVD prevention strategies. In this study, we addressed the major issue of class imbalance in the Behavioral Risk Factor Surveillance System (BRFSS) 2021 heart disease dataset, including personal lifestyle factors, by exploring several resampling techniques, such as the Synthetic Minority Oversampling Technique (SMOTE), Adaptive Synthetic Sampling (ADASYN), SMOTE-Tomek, and SMOTE-Edited Nearest Neighbor (SMOTE-ENN). Subsequently, we trained, tested, and evaluated multiple classifiers, including logistic regression (LR), decision trees (DTs), random forest (RF), gradient boosting (GB), XGBoost (XGB), CatBoost, and artificial neural networks (ANNs), comparing their performance with a primary focus on maximizing sensitivity for CVD risk prediction. Based on our findings, the hybrid resampling techniques outperformed the alternative sampling techniques, and our proposed implementation includes SMOTE-ENN coupled with CatBoost optimized through Optuna, achieving a remarkable 88% rate for recall and 82% for the area under the receiver operating characteristic (ROC) curve (AUC) metric.

Full article

(This article belongs to the Collection Feature Papers in Algorithms and Mathematical Models for Computer-Assisted Diagnostic Systems)

Open Access Article

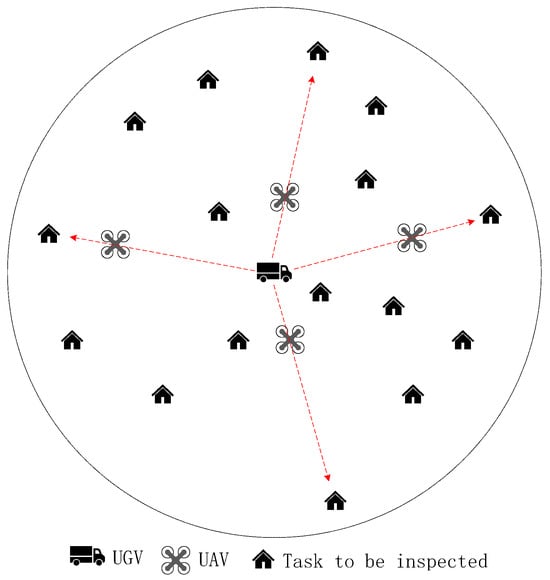

Mission Planning of UAVs and UGV for Building Inspection in Rural Area

by

Xiao Chen, Yu Wu and Shuting Xu

Algorithms 2024, 17(5), 177; https://doi.org/10.3390/a17050177 - 26 Apr 2024

Abstract

Unmanned aerial vehicles (UAVs) have become increasingly popular in the civil field, and building inspection is one of the most promising applications. In a rural area, the UAVs are assigned to inspect the surface of buildings, and an unmanned ground vehicle (UGV) is introduced to carry the UAVs to the rural area and also serve as a charging station. This paper focuses on the mission planning problem for UAV and UGV systems, with the goal of realizing an efficient inspection of buildings in a specific rural area. Firstly, the mission planning problem (MPP) involving UGVs and UAVs is described, and an optimization model is established with the objective of minimizing the total UAV operation time, fully considering the impact of UAV operation time and its cruising capability. Subsequently, the locations of parking points are determined based on the information about task points. Finally, a hybrid ant colony optimization-genetic algorithm (ACO-GA) is designed to solve the problem. The update mechanism of ACO is incorporated into the selection operation of GA. At the same time, the GA is improved to address two defects: the tendency of GA to fall into local optima and the insufficient search ability of ACO. Simulation results demonstrate that the ACO-GA algorithm can obtain reasonable solutions for MPP, and the search capability of the algorithm is enhanced, presenting significant advantages over the original GA and ACO.

Full article

(This article belongs to the Section Evolutionary Algorithms and Machine Learning)

Open Access Review

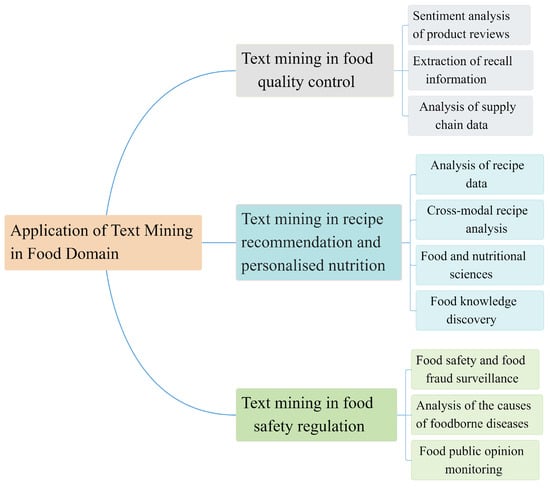

A Survey of the Applications of Text Mining for the Food Domain

by

Shufeng Xiong, Wenjie Tian, Haiping Si, Guipei Zhang and Lei Shi

Algorithms 2024, 17(5), 176; https://doi.org/10.3390/a17050176 - 25 Apr 2024

Abstract

In the food domain, text mining techniques are extensively employed to derive valuable insights from large volumes of text data, facilitating applications such as aiding food recalls, offering personalized recipes, and reinforcing food safety regulation. To provide researchers and practitioners with a comprehensive understanding of the latest technology and application scenarios of text mining in the food domain, the pertinent literature is reviewed and analyzed. Initially, the fundamental concepts, principles, and primary tasks of text mining, encompassing text categorization, sentiment analysis, and entity recognition, are elucidated. Subsequently, an analysis of diverse types of data sources within the food domain and the characteristics of text data mining is conducted, spanning social media, reviews, recipe websites, and food safety reports. Furthermore, the applications of text mining in the food domain are scrutinized from the perspective of various scenarios, including leveraging consumer food reviews and feedback to enhance product quality, providing personalized recipe recommendations based on user preferences and dietary requirements, and employing text mining for food safety and fraud monitoring. Lastly, the opportunities and challenges associated with the adoption of text mining techniques in the food domain are summarized and evaluated. In conclusion, text mining holds considerable potential for application in the food domain, thereby propelling the advancement of the food industry and upholding food safety standards.

Full article

(This article belongs to the Special Issue Machine Learning Algorithms and Optimization in the Digital Transition)

Open Access Article

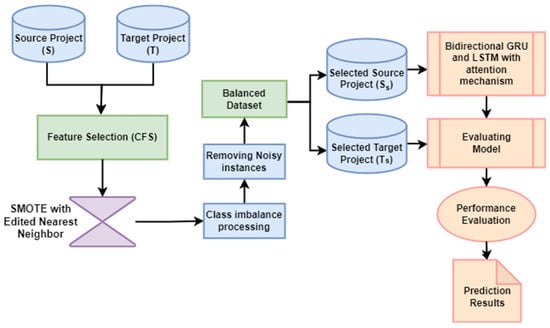

Cross-Project Defect Prediction Based on Domain Adaptation and LSTM Optimization

by

Khadija Javed, Ren Shengbing, Muhammad Asim and Mudasir Ahmad Wani

Algorithms 2024, 17(5), 175; https://doi.org/10.3390/a17050175 - 24 Apr 2024

Abstract

Cross-project defect prediction (CPDP) aims to predict software defects in a target project domain by leveraging information from different source project domains, allowing testers to identify defective modules quickly. However, CPDP models often underperform due to different data distributions between source and target domains, class imbalances, and the presence of noisy and irrelevant instances in both source and target projects. Additionally, standard features often fail to capture sufficient semantic and contextual information from the source project, leading to poor prediction performance in the target project. To address these challenges, this research proposes Smote Correlation and Attention Gated recurrent unit based Long Short-Term Memory optimization (SCAG-LSTM), which first employs a novel hybrid technique that extends the synthetic minority over-sampling technique (SMOTE) with edited nearest neighbors (ENN) to rebalance class distributions and mitigate the issues caused by noisy and irrelevant instances in both source and target domains. Furthermore, correlation-based feature selection (CFS) with best-first search (BFS) is utilized to identify and select the most important features, aiming to reduce the differences in data distribution among projects. Additionally, SCAG-LSTM integrates bidirectional gated recurrent unit (Bi-GRU) and bidirectional long short-term memory (Bi-LSTM) networks to enhance the effectiveness of the long short-term memory (LSTM) model. These components efficiently capture semantic and contextual information as well as dependencies within the data, leading to more accurate predictions. Moreover, an attention mechanism is incorporated into the model to focus on key features, further improving prediction performance. 
Experiments are conducted on apache_lucene, equinox, eclipse_jdt_core, eclipse_pde_ui, and mylyn (AEEEM) and predictor models in software engineering (PROMISE) datasets and compared with active learning-based method (ALTRA), multi-source-based cross-project defect prediction method (MSCPDP), the two-phase feature importance amplification method (TFIA) on AEEEM and the two-phase transfer learning method (TPTL), domain adaptive kernel twin support vector machines method (DA-KTSVMO), and generative adversarial long-short term memory neural networks method (GB-CPDP) on PROMISE datasets. The results demonstrate that the proposed SCAG-LSTM model enhances the baseline models by 33.03%, 29.15% and 1.48% in terms of F1-measure and by 16.32%, 34.41% and 3.59% in terms of Area Under the Curve (AUC) on the AEEEM dataset, while on the PROMISE dataset it enhances the baseline models’ F1-measure by 42.60%, 32.00% and 25.10% and AUC by 34.90%, 27.80% and 12.96%. These findings suggest that the proposed model exhibits strong predictive performance.

Full article

(This article belongs to the Special Issue Algorithms in Software Engineering)

Topics

Topic in

Algorithms, Computation, Entropy, Fractal Fract, MCA

Analytical and Numerical Methods for Stochastic Biological Systems

Topic Editors: Mehmet Yavuz, Necati Ozdemir, Mouhcine Tilioua, Yassine Sabbar

Deadline: 10 May 2024

Topic in

Algorithms, Diagnostics, Entropy, Information, J. Imaging

Application of Machine Learning in Molecular Imaging

Topic Editors: Allegra Conti, Nicola Toschi, Marianna Inglese, Andrea Duggento, Matthew Grech-Sollars, Serena Monti, Giancarlo Sportelli, Pietro Carra

Deadline: 31 May 2024

Topic in

Algorithms, Axioms, Fractal Fract, Mathematics, Symmetry

Fractal and Design of Multipoint Iterative Methods for Nonlinear Problems

Topic Editors: Xiaofeng Wang, Fazlollah Soleymani

Deadline: 30 June 2024

Topic in

Algorithms, Computation, Information, Mathematics

Complex Networks and Social Networks

Topic Editors: Jie Meng, Xiaowei Huang, Minghui Qian, Zhixuan Xu

Deadline: 31 July 2024

Special Issues

Special Issue in

Algorithms

Bio-Inspired Algorithms

Guest Editors: Sándor Szénási, Gábor Kertész

Deadline: 20 May 2024

Special Issue in

Algorithms

Algorithms for Smart Cities

Guest Editors: Gloria Cerasela Crisan, Elena Nechita

Deadline: 31 May 2024

Special Issue in

Algorithms

Algorithms for Games AI

Guest Editors: Wenxin Li, Haifeng Zhang

Deadline: 20 June 2024

Special Issue in

Algorithms

Recurrent Neural Networks: algorithms design and applications for safety critical systems

Guest Editor: Grazziela Patrocinio Figueredo

Deadline: 30 June 2024

Topical Collections

Topical Collection in

Algorithms

Feature Papers in Algorithms for Multidisciplinary Applications

Collection Editor: Francesc Pozo

Topical Collection in

Algorithms

Feature Papers in Randomized, Online and Approximation Algorithms

Collection Editor: Frank Werner

Topical Collection in

Algorithms

Featured Reviews of Algorithms

Collection Editors: Arun Kumar Sangaiah, Xingjuan Cai